Deploying Large Language Models (LLMs) at scale presents a classic infrastructure dilemma. Small embedding models sip GPU memory, while 70B+ parameter behemoths demand multiple GPUs. This diversity often leads to chronically low GPU utilization, ballooning compute costs, and unpredictable latency spikes. The core challenge isn't just throwing more hardware at the problem; it's about intelligent orchestration that understands inference workload patterns. Without it, teams are stuck choosing between overprovisioning (wasting resources) and underprovisioning (risking performance).

This post explores how combining NVIDIA NIM for standardized model deployment with NVIDIA Run:ai's advanced scheduling can solve this. We'll break down key strategies like GPU fractions, dynamic memory management, and GPU memory swap, backed by benchmark data showing up to ~2x better GPU utilization and 44-61x faster cold-start latency.

The Power of GPU Fractions and Bin Packing

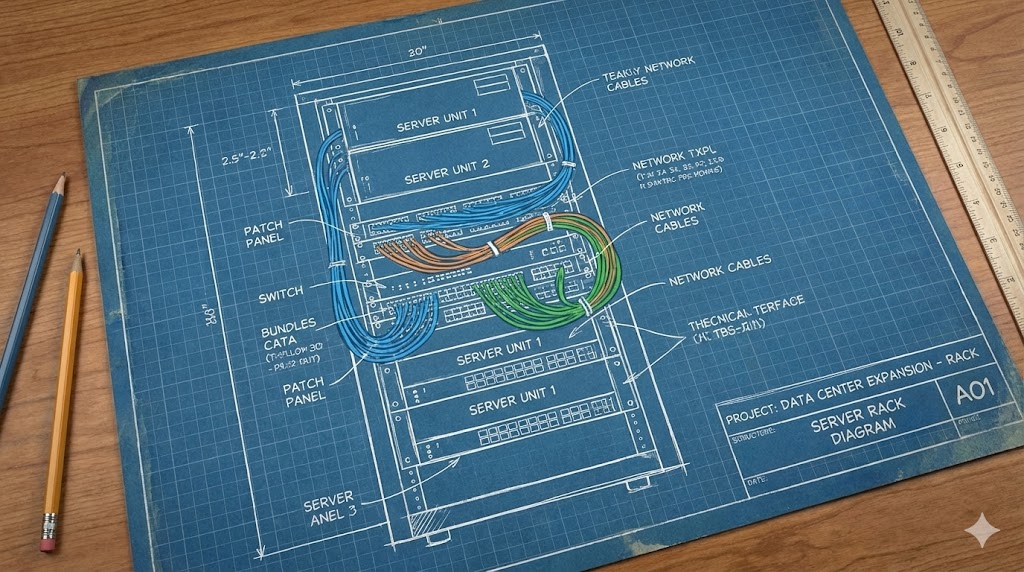

Many inference workloads (embeddings, rerankers, smaller LLMs) don't need an entire GPU. Assigning each of them a full GPU 'just to be safe' is a major source of waste. NVIDIA Run:ai's GPU fractions provide true memory isolation (not soft limits), allowing multiple NIM microservices to safely share a single GPU.

How Bin Packing Works: The scheduler scores GPUs by their current utilization and prioritizes filling partially used GPUs before allocating new ones. This 'bin packing' strategy maximizes cluster density.
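To make the scoring idea concrete, here is a minimal sketch of a best-fit placement loop. It is not Run:ai's scheduler: the `Gpu` class, the `pick_gpu` and `place` helpers, and the memory figures are all hypothetical, and a real scheduler would weigh far more than memory.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Gpu:
    name: str
    total_gb: float
    used_gb: float = 0.0

    @property
    def free_gb(self) -> float:
        return self.total_gb - self.used_gb


def pick_gpu(gpus: list, request_gb: float) -> Optional[Gpu]:
    """Best-fit choice: the fullest GPU that still has room for the request."""
    candidates = [g for g in gpus if g.free_gb >= request_gb]
    if not candidates:
        return None
    # Highest utilization wins, so partially used GPUs fill up before empty ones.
    return max(candidates, key=lambda g: g.used_gb / g.total_gb)


def place(gpus: list, workloads: dict) -> dict:
    """Place workloads in order and return a workload -> GPU name mapping."""
    placement = {}
    for name, request_gb in workloads.items():
        gpu = pick_gpu(gpus, request_gb)
        if gpu is None:
            raise RuntimeError(f"no GPU can fit {name} ({request_gb} GB)")
        gpu.used_gb += request_gb
        placement[name] = gpu.name
    return placement


# Hypothetical cluster and memory requests (GB), for illustration only.
gpus = [Gpu("gpu-0", 80), Gpu("gpu-1", 80), Gpu("gpu-2", 80)]
workloads = {"embedder": 8, "reranker": 12, "llm-7b": 40, "vlm-12b": 40}
placement = place(gpus, workloads)
print(placement)
```

With these made-up numbers, the first three workloads pack onto `gpu-0`, the fourth spills onto `gpu-1`, and `gpu-2` stays completely free for other jobs.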

Benchmark Insight: In a test with three NIM models (7B, 12B VLM, 30B MoE) on H100 GPUs, consolidating them onto ≈1.5 GPUs (vs. 3 dedicated GPUs) retained 91–100% of the single-GPU throughput with only modest latency increases. This effectively freed up half the GPU capacity for other workloads.

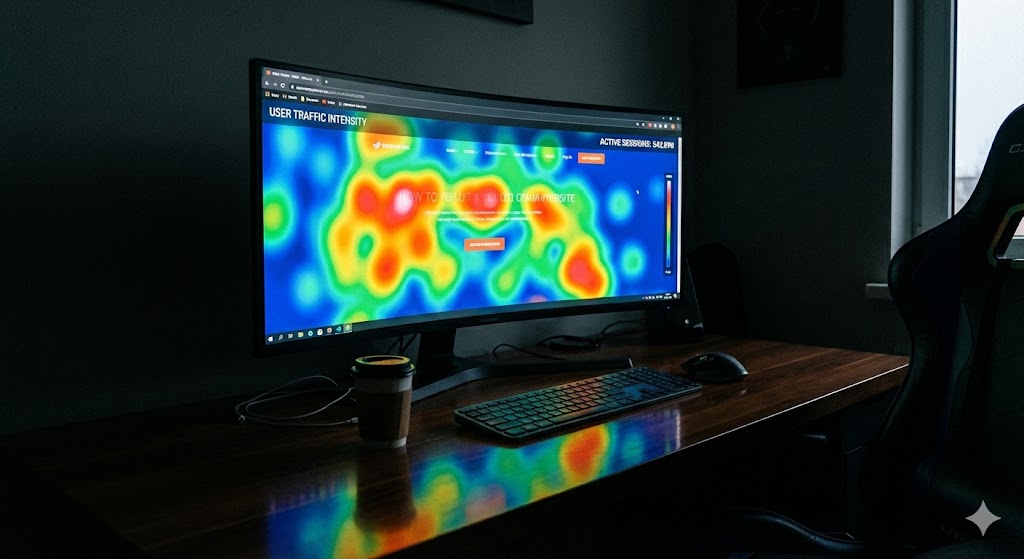

Dynamic GPU Fractions: Handling Traffic Spikes

Static fractions guarantee isolation but create a rigid ceiling. When concurrent requests surge, the model's Key-Value (KV) cache grows. Hitting that fixed memory boundary causes throughput to plateau and latency to spike.

Dynamic fractions solve this using a Kubernetes-inspired request/limit model for GPU memory:

- Request: A guaranteed minimum memory reservation.

- Limit: A burstable upper bound the workload can use when needed.

During traffic spikes, a NIM can 'burst' towards its limit, claiming extra memory to maintain performance, then release it when demand subsides.
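The request/limit behavior can be sketched as a small allocation function. This is an illustration under assumptions, not Run:ai's API: the `Workload` fields, the `allocate` helper, the first-come-first-served sharing of spare memory, and all sizes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    request_gb: float  # guaranteed minimum reservation
    limit_gb: float    # burstable upper bound
    demand_gb: float   # what the workload currently wants (weights + KV cache)


def allocate(gpu_total_gb: float, workloads: list) -> dict:
    """Grant every request first, then hand out spare memory up to each limit."""
    reserved = sum(w.request_gb for w in workloads)
    if reserved > gpu_total_gb:
        raise ValueError("requests exceed GPU memory; guarantees cannot be honored")

    grants = {w.name: w.request_gb for w in workloads}
    spare = gpu_total_gb - reserved
    for w in workloads:  # assumption: first come, first served
        wanted = min(w.demand_gb, w.limit_gb) - w.request_gb
        extra = max(0.0, min(wanted, spare))
        grants[w.name] += extra
        spare -= extra
    return grants


# During a spike the LLM wants 60 GB: it bursts past its 40 GB request.
spike = allocate(80, [
    Workload("llm", request_gb=40, limit_gb=64, demand_gb=60),
    Workload("embedder", request_gb=8, limit_gb=16, demand_gb=6),
])

# When demand subsides, the grant falls back to the guaranteed request.
quiet = allocate(80, [
    Workload("llm", request_gb=40, limit_gb=64, demand_gb=35),
    Workload("embedder", request_gb=8, limit_gb=16, demand_gb=6),
])
print(spike, quiet)
```

The guaranteed request is never taken away, which is what preserves isolation; only memory above the requests is shared.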

Benchmark Results: Compared to static fractions, dynamic fractions delivered:

- Up to 1.4x higher throughput under heavy concurrency.

- Up to 1.7x lower latency (p50).

For example, the Nemotron-3-Nano-30B model sustained 1,025 tokens/sec at 256 concurrent requests with dynamic fractions, versus a ceiling of 721 tokens/sec, reached at just 4 concurrent requests, with static fractions.

GPU Memory Swap: Eliminating the Cold-Start Penalty

Keeping rarely used models 'always-on' on a GPU guarantees low latency but wastes capacity. Scaling them to zero frees the GPU but introduces a massive cold-start penalty: tens of seconds or more to restart the container and load weights from disk.

How GPU Memory Swap Works: Weights for idle models are kept in CPU memory rather than on disk. When a request arrives for an idle model, Run:ai swaps the currently loaded GPU weights to CPU RAM and loads the requested model into GPU memory. This avoids container restarts and disk I/O.
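The swap flow can be illustrated with a toy simulator. This is a sketch of the idea only: the `SwapSimulator` class, its least-recently-used eviction policy, and the model sizes are assumptions for illustration, and nothing here reflects how Run:ai actually moves memory.

```python
from collections import OrderedDict


class SwapSimulator:
    """Toy model of swapping: weights live either on the GPU or in CPU RAM."""

    def __init__(self, gpu_capacity_gb, model_sizes_gb):
        self.gpu_capacity_gb = gpu_capacity_gb
        self.sizes = dict(model_sizes_gb)
        self.on_gpu = OrderedDict()    # ordered from least to most recently used
        self.in_cpu = set(self.sizes)  # every model starts parked in CPU RAM

    def handle_request(self, model):
        """Make `model` resident on the GPU; return the models swapped out."""
        if self.sizes[model] > self.gpu_capacity_gb:
            raise ValueError(f"{model} does not fit on this GPU")
        if model in self.on_gpu:
            self.on_gpu.move_to_end(model)  # mark as most recently used
            return []
        swapped_out = []
        # Assumed policy: evict least recently used models until there is room.
        while sum(self.on_gpu.values()) + self.sizes[model] > self.gpu_capacity_gb:
            victim, _ = self.on_gpu.popitem(last=False)
            self.in_cpu.add(victim)  # weights go to CPU RAM, not back to disk
            swapped_out.append(victim)
        self.in_cpu.discard(model)
        self.on_gpu[model] = self.sizes[model]
        return swapped_out


# Hypothetical sizes (GB): the two models cannot both fit on a 40 GB share.
sim = SwapSimulator(40, {"model-a": 16, "model-b": 30})
history = [sim.handle_request(m) for m in ["model-a", "model-b", "model-a", "model-a"]]
print(history)
```

In this run the second and third requests each trigger one swap, while the fourth hits a model that is already resident and costs nothing extra.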

Latency Benchmark (Scale-from-Zero vs. GPU Memory Swap), measured as time to first token (TTFT):

| Model | Input Tokens | Cold-Start TTFT | GPU Swap TTFT | Improvement |
|---|---|---|---|---|
| Mistral-7B | 128 | 75.3 s | 1.23 s | 61x |
| Nemotron-3-Nano-30B | 2048 | 180.2 s | 4.02 s | ~44x |
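The improvement column follows directly from the two TTFT columns. A short check, using only the figures reported in the table:

```python
# (cold-start TTFT, GPU swap TTFT) in seconds, copied from the table above.
benchmarks = {
    "Mistral-7B": (75.3, 1.23),
    "Nemotron-3-Nano-30B": (180.2, 4.02),
}

speedups = {model: cold / swap for model, (cold, swap) in benchmarks.items()}
for model, speedup in speedups.items():
    print(f"{model}: {speedup:.1f}x faster time to first token")
```

The ratios come out at about 61.2x and 44.8x, which match the table's 61x and ~44x when truncated to whole numbers.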

Combined with GPU fractions, swap allows infrequently accessed models to share hardware efficiently without sacrificing responsiveness.

Limitations and Considerations

While powerful, these strategies require careful planning:

- Dynamic Fractions: Best for variable traffic and models with significant KV-cache growth. For predictable, low-concurrency workloads, static fractions may be simpler and sufficient.

- GPU Memory Swap: Involves overhead from moving data between CPU and GPU memory. It's ideal for 'warm' models that are idle but may be needed, not for models that are completely cold for very long periods.

- Orchestration Complexity: Implementing this effectively requires a sophisticated scheduler like Run:ai. Naive sharing without proper isolation leads to Out-Of-Memory (OOM) errors and performance interference.

Getting Started and Next Steps

The combination of NVIDIA NIM for standardized, optimized inference and NVIDIA Run:ai for intelligent orchestration represents a significant leap in efficient AI infrastructure. You can start by exploring the practical guide to deploying NIM on Run:ai.

Your Learning Path:

- Experiment with NIM: Deploy a small model using NIM to understand the containerized microservice approach.

- Profile Your Workloads: Measure the memory footprint and concurrency patterns of your inference models to decide between static or dynamic fractions.

- Plan for Heterogeneity: Design your cluster to handle a mix of small and large models, leveraging bin packing.

- Implement Observability: Use Run:ai's visibility tools to monitor GPU utilization, latency, and the effectiveness of your scheduling policies.
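For the profiling step, a back-of-the-envelope KV-cache estimate can help turn measurements into a request and a limit. The sketch below assumes a standard transformer decoder with grouped-query attention and 16-bit cache values; the `kv_cache_gib` and `size_fraction` helpers, the model shape, and the 14 GiB weight footprint are hypothetical, and architectures that are not pure attention will not follow this formula. Measured numbers should always take precedence over the estimate.

```python
def kv_cache_gib(num_layers, num_kv_heads, head_dim, tokens_in_flight, bytes_per_value=2):
    """Approximate KV-cache size: keys plus values for every layer and cached token."""
    bytes_per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return bytes_per_token * tokens_in_flight / 2**30


def size_fraction(weights_gib, typical_tokens, peak_tokens, **model_shape):
    """Suggest a guaranteed request (typical load) and a burst limit (peak load)."""
    request = weights_gib + kv_cache_gib(tokens_in_flight=typical_tokens, **model_shape)
    limit = weights_gib + kv_cache_gib(tokens_in_flight=peak_tokens, **model_shape)
    return {"request_gib": request, "limit_gib": limit}


# Hypothetical model shape and traffic profile, for illustration only.
shape = {"num_layers": 32, "num_kv_heads": 8, "head_dim": 128}
plan = size_fraction(
    weights_gib=14.0,
    typical_tokens=4 * 2048,  # 4 concurrent requests of 2,048 tokens each
    peak_tokens=32 * 2048,    # 32 concurrent requests of 2,048 tokens each
    **shape,
)
print(plan)
```

If the request and the limit come out close together, a static fraction is probably enough; a wide gap, as in this example, is the case where dynamic fractions pay off.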

As AI models continue to grow and diversify, efficient resource management will be the key differentiator between cost-prohibitive and sustainable deployments. Mastering these scheduling primitives is the next step for any team running production LLMs.
